The Tableau REST API has convenient endpoints for retrieving a Workbook or View Preview Image. Unfortunately, there are many conditions under which Tableau Server itself generates nothing other than the gray “User Filtered View” thumbnail. This post describes a process for using the full Image (not preview) REST API endpoints to generate attractive thumbnail images.

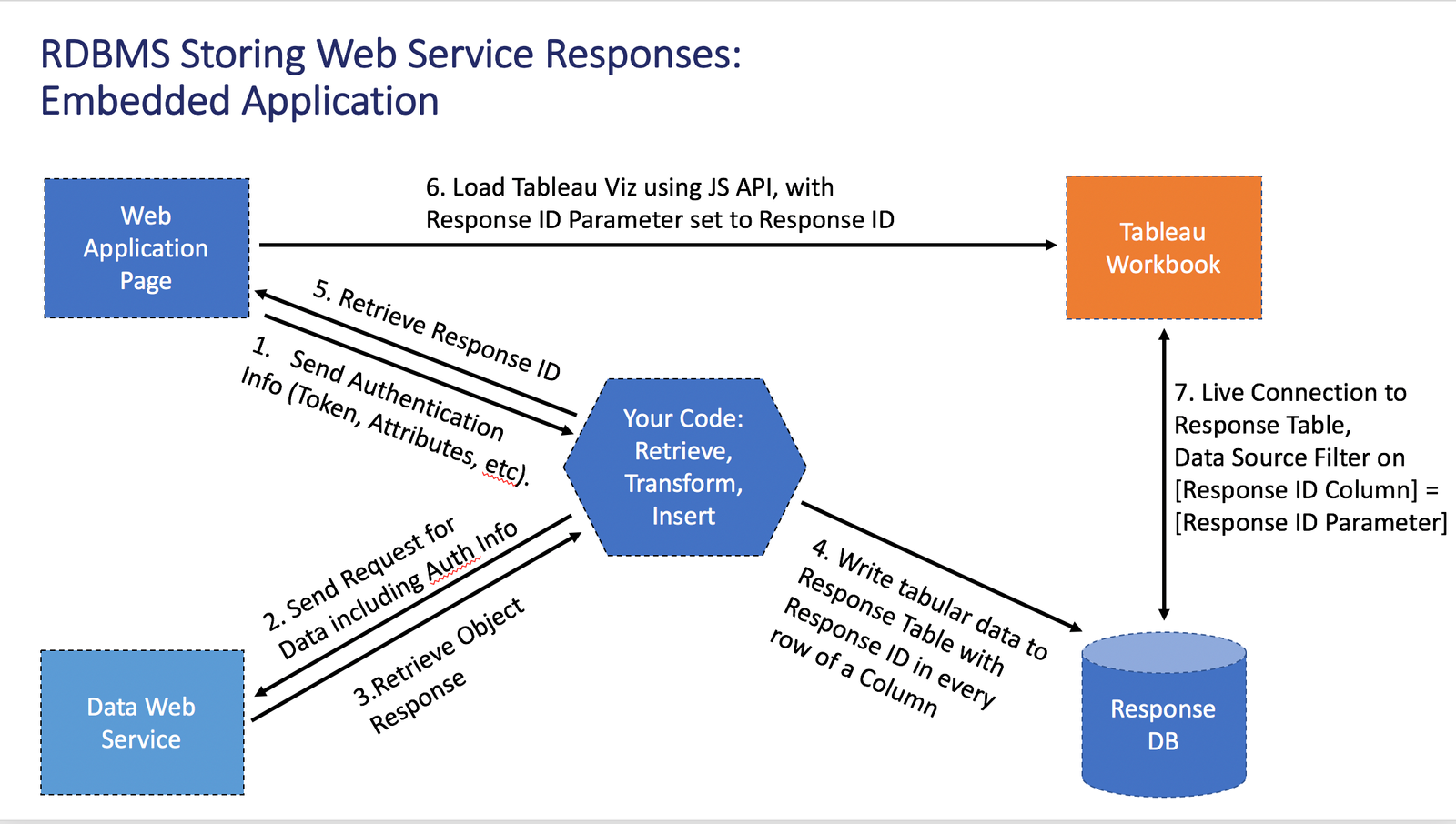

One condition that results in the gray generic thumbnail is a workbook connected to a Published Data Source that has Row Level Security set up through Data Source Filters.

The basic workflow is:

- Request all of the Views from a Workbook (or just the Default View if you only need thumbnails at the Workbook level) for one particular user who you know has enough data access to produce a good-looking thumbnail

- Call the actual Image endpoints (instead of the Thumbnail / Preview endpoints) for every View you need

- Reduce the images to thumbnail size

- Cache the thumbnails for efficient retrieval by your embedded web application
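
The steps above can be sketched in Python roughly as follows. This is a minimal illustration rather than a finished implementation: the function names, the `API_VERSION` value, the 300x300 thumbnail size, and the one-file-per-View cache layout are all assumptions made for the example. It also assumes you have already signed in as the chosen user and hold that session's token, and that the View IDs were read from the response of the Query Views for Workbook request. Resizing uses the third-party Pillow library.

```python
import io
import urllib.request
from pathlib import Path
from typing import Callable

API_VERSION = "3.19"  # assumption: set this to the REST API version of your server


def views_url(server: str, site_id: str, workbook_id: str) -> str:
    """URL that lists the Views of a Workbook (step 1)."""
    return f"{server}/api/{API_VERSION}/sites/{site_id}/workbooks/{workbook_id}/views"


def image_url(server: str, site_id: str, view_id: str) -> str:
    """URL of the full View Image endpoint, not the preview image (step 2)."""
    return f"{server}/api/{API_VERSION}/sites/{site_id}/views/{view_id}/image?resolution=high"


def fetch_image(url: str, token: str) -> bytes:
    """Download the image bytes using the signed-in user's session token."""
    request = urllib.request.Request(url, headers={"X-Tableau-Auth": token})
    with urllib.request.urlopen(request) as response:
        return response.read()


def shrink_to_thumbnail(png_bytes: bytes, max_size=(300, 300)) -> bytes:
    """Reduce the full image to thumbnail size (step 3)."""
    from PIL import Image  # Pillow; imported here so the rest works without it

    image = Image.open(io.BytesIO(png_bytes))
    image.thumbnail(max_size)  # resizes in place and preserves the aspect ratio
    out = io.BytesIO()
    image.save(out, format="PNG")
    return out.getvalue()


def cached_thumbnail(cache_dir: Path, view_id: str, produce: Callable[[], bytes]) -> bytes:
    """Return the cached thumbnail, calling produce() only on a cache miss (step 4)."""
    path = cache_dir / f"{view_id}.png"
    if path.exists():
        return path.read_bytes()
    data = produce()
    cache_dir.mkdir(parents=True, exist_ok=True)
    path.write_bytes(data)
    return data


def thumbnail_for_view(server: str, site_id: str, view_id: str,
                       token: str, cache_dir: Path) -> bytes:
    """Tie the steps together for a single View."""
    return cached_thumbnail(
        cache_dir,
        view_id,
        lambda: shrink_to_thumbnail(fetch_image(image_url(server, site_id, view_id), token)),
    )
```

A file-based cache is only one option. Because the cache sits behind a single function, it could be swapped for whatever store your embedded web application already uses, and you would also need some policy for refreshing thumbnails when the underlying workbook changes.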