Row Level Security is User-Based Automatic Data Filtering. When a user views a Workbook or Data Source in Tableau Desktop or Server, they only see the rows of data they are entitled to. This is separate from Permissions, which handle if someone can view / interact / etc. with the Workbook or Data Source.

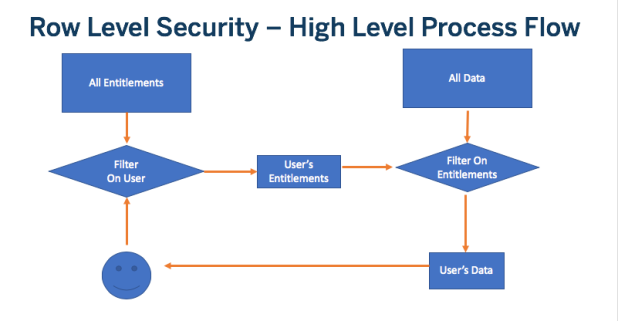

Regardless of how it is implemented, Row Level Security follows this workflow:

- User is identified

- The set of Data Entitlements for the User is retrieved from all possible Data Entitlements

- The Data is filtered by that set of Data Entitlements

- The filtered data is returned to the end user

Unbreakable Security Filters in Tableau

A user-based security filter in Tableau is only fully secured by the following process:

- Create a Calculated Field in Tableau Desktop utilizing a User Function , or a Parameter (see Securing Tableau Parameters)

- Create a Data Source Filter on the Calculated Field in Desktop

- Publish the Data Source to Tableau Server

The majority of secure techniques for achieving Row Level Security in Tableau Server depend on each user having a distinct username in Tableau Server and that the user be logged in as that username. Syncing usernames (and groups) can be achieved via Active Directory, LDAP (on Linux) or from any source using the Tableau Server REST API.

Tableau Desktop has a “Create User Filter” option in the Server menu, but it is completely manual and hard-coded. For dynamic row level security that ties into a database or a security service, you’ll need to connect the user information to the data that exists somehow.

Standard Row Level Security (Entitlements in Database Tables)

There are several methods for implementing Row Level Security using a Live Connection. The vast majority of them are based on the concept of using the User Functions in Tableau to match against a column in a database, so that the resulting rows limit the data that can be seen.

The quick overview to get you started with the best practices in a practical way is: Quick Explanation of Row Level Security in Tableau

To learn all of the details of the standard method of Row Level Security:

Tableau’s Row Level Security Whitepaper.

Very similar to the whitepaper, this blog post has been around longer and covers most of the same topics in almost the same way:

How to set up your Database for Row Level Security in Tableau

Tableau can take advantage of certain RDBMS systems which have their own facilities for Row Level Security. Examples of this would be using Impersonate / Run As for Microsoft SQL Server, or Initial SQL to use VPD in Oracle.

Stored Procedures as a Data Source

When using Stored Procedures (in the RDBMSs that are supported by Tableau), you are faced with dilemma: Tableau can link Tableau Parameters (but not the User Functions) to Stored Procedure Parameter values, but Tableau Parameters can be changed by an end user via the URL or JavaScript and thus are not secure.

You have a few options:

- If the Stored Procedure returns a Table (is a Table Value Function), you can access it using the Custom SQL dialog in Tableau. This is covered as part of building a security decoder function

- Using Initial SQL to Pass Usernames to Stored Procedures or Views in SQL Server

If you want to pass the security entitlements into a workbook at load time, with no entitlements stored in the database, you must take care to build a Parameter + Data Source Filter combination that is secure:

Passing a JWT as a Tableau Parameter

Securely Passing Parameters into a Tableau Viz at Load Time

Extract Row Level Security

2018.3 and Later Versions

Starting in Tableau 2018.3, the Hyper extract engine has been updated with an option to bring in Multiple Tables, specifically with the intention of making Row Level Security easier to implement. Using Multiple Tables can vastly reduce the time it takes to generate an extract, by completely avoiding the “row duplication blow-up”.

Read more about how it works and how to implement it here:

Multiple Table (Normalized) Hyper Extracts

If you have a use case where you need more control over building the Extracts than the Tableau Desktop dialogs allow, you may want to use the Hyper API to generate the extracts themselves. When you do, however, you’ll want to finish off the process by inserting the newly generated Hyper files into a TDSX or TWBX for publishing to Tableau Server:

The Missing Link – Putting a Hyper File from the Hyper API into a TDSX or TWBX File

Prior to 2018.3 or when using “Single Table” extracts in 2018.3+

Tableau Extracts take whatever table relationships from the Live Connection screen and combine them into a single table through one query, the results of which are transformed in a single table in the Extract file. This process is technically called “denormalization”, and often results in the number of rows multiplying, which is called, very technically, “blowing up”.

To implement Row Level Security without your data “blowing up”, you should use the CONTAINS() method from Part Two of the blog posts below:

- Defusing Row Level Security in Your Extracts (Before They Blow Up) Part Two

- Defusing Row Level Security in Tableau Data Extracts (Before They Blow Up) Part 1

One other element that should also be taken into account is that if you have multiple different clients, you may want to make separate extracts for each of them, so that the Extract itself only has the appropriate data for each client. Instructions for this type of template Data Source creation and maintenance are contained in:

Multi-Tenant Data Environments: Templates and Other Solutions

Token Authentication / Authorization

Many organizations have moved to having their authentication and authorization come from another service which generates auth tokens, which include entitlement information through along with the user.

It is always recommended that you still log the user into Tableau using an SSO method (if you are embedding) or a secure method like Kerberos if they are on your local domain.

If your database is set up to use auth tokens, there are two mechanisms by which you could get the tokens into the database, the first of which is probably more efficient:

Passing a JWT as a Tableau Parameter

Using Initial SQL to Pass Usernames to Stored Procedures or Views in SQL Server

Parameters or Attribute Based Security

Frequently people want to pass in some type of “parameters” to set certain attributes for data security filtering. You cannot do this securely using Tableau’s Parameters, because the user or the JS page can reset their values at any time. The following is the best architecture we know of for achieving this, although it is quite a bit of work:

Attribute-Based Row Level Security in Tableau, a Working Technique

This post describes why Tableau Parameters are not secure and what it would take to make them “trustworthy” for use as data security filtering:

Securely Passing Parameters into a Tableau Viz at Load Time

Web Services / RESTful data

While Tableau provides the Web Data Connector framework for accessing web services / REST APIs, it is built around a single-user authentication framework — in essence, whoever authenticated at publish time to Tableau Server is the only user who a WDC can authenticate as. There is no API for changing those credentials or publishing variations, so it doesn’t really scale up.

If you need a “Live” Connection to a Web Service / REST API as your datasource, the solution provided here is free / repeatable / and scalable:

Web Services / REST APIs as a Data Source in Tableau: A PostgreSQL/Python solution

It is based on the architectures originally described here:

“Live” Web Services Connections in Tableau

If the RESTful web service calls are generated from simple Stored Procedures in a Databases, you can instead just connect directly to those Stored Procedures, using some of the techniques from earlier in this article to pass in the authorization parameters.

You can also extract out larger subsets of data than a per user basis and then use the Extracts Row Level Security techniques from above to achieve the filtering. Depending on the size of your data, that may be the easiest technique to get the best performance. If doing this, I would recommend the Hyper API over the Web Data Connector, as you can offload the extract generation onto another machine and build in your variations directly into your generation program. Building a Flexible Extract Generator using the Extract API describes the necessary pieces of functionality to give your end users a way to define and receive arbitrary Extracts.